Many teams use AI to generate code. It is great at producing lots of code quickly, so it looks like a productivity boost. But is the code good? Does it work? And what about your technical debt: is it growing in proportion to the newly generated code? Some teams tackle this by guard-railing what AI does with human code reviews. Others are starting to use AI for the code review phase as well. Anthropic recently offered a $25-per-merge code review agent… So, what’s the smart way to use AI for code reviews? Read on, the answer is here.

What can AI do for your Code Review Process?

You’ve decided to use AI to perform code review for you. Whether the code itself is hand-written or AI-generated, code review plays by different rules altogether. You create an agent and instruct it to review the code; you even give it your favorite checklist and ask it to be ‘strict’.

Then you hit the wall everyone hits, which is “how can I tell whether this code review agent is effective?”

The first thing to realize is that to use an agent for code review, you must give it a measure of success. You need to be able to measure its effectiveness. Otherwise it will run, burn tokens, and do something, but you will never be sure whether it is actually effective.

This is a special case of the general question, “how can we tell if a code review is effective?” When asked that, most teams describe their process: what they do, the importance they assign to it, the seniority of the individuals participating, the extensiveness of the checklist, and how well it is integrated into their workflow… All of this is process. It has nothing to do with results, and it says nothing about effectiveness.

To assess code review effectiveness, one has to consider what this phase is for. It is a quality gate in the software development lifecycle (SDLC), and as such it needs to catch and block issues before they advance to later phases of the SDLC. Once you realize that, you need to start looking at code review escapes.

A code review escape is a bug, defect, customer escalation, or other issue that was found sometime after the code review (usually in testing or production) and could have been identified in the code review phase, had the review been more thorough. The share of those escapes among all of your problems is your code review escape ratio.
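The definition above can be turned into a small calculation. The following is a minimal sketch, not a prescribed implementation: the `Defect` record, its field names, and the sample data are all hypothetical, and it assumes that someone (for example, during root cause analysis) has already marked each defect as catchable in review or not.

```python
from dataclasses import dataclass


@dataclass
class Defect:
    """One problem found after code review (hypothetical record shape)."""
    id: str
    found_in: str           # e.g. "testing" or "production"
    review_catchable: bool  # verdict: could a thorough review have caught it?


def escape_ratio(defects: list[Defect]) -> float:
    """Share of all recorded defects that were code review escapes."""
    if not defects:
        return 0.0
    escapes = sum(1 for d in defects if d.review_catchable)
    return escapes / len(defects)


# Illustrative data only: two of the four defects are escapes.
defects = [
    Defect("BUG-1", "testing", True),
    Defect("BUG-2", "production", True),
    Defect("BUG-3", "production", False),
    Defect("BUG-4", "testing", False),
]
print(f"Escape ratio: {escape_ratio(defects):.0%}")
```

Tracking this number per release or per sprint is one way to see whether changes to the review process are actually moving it.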

Now comes the fun part. Once you know how to identify a code review escape, you can update your code review process to catch these types of issues on an ongoing basis. You close the loophole through which the problem escaped. This is where humans are not a perfect fit for the task. It is hard for humans to go over every issue found in a software product and reverse-engineer what the code review process should have been in order to catch similar issues in the future. In the best-case scenario, the best teams perform root cause analysis (RCA) and try to use it to train reviewers to look for patterns. Even when this is done, and even when escape ratios are measured continuously, the actual improvement is quite limited.

Back to AI (this is what we’re here for). AI is great at digesting large amounts of data and finding patterns. This is the perfect use for our code review AI agent. It has the potential to become far better at catching issues up front, if we allow it to learn from the problems that were found.

And so, to close the loop: feed your code review AI agent the never-ending stream of previously found and newly found problems, and ask it to read the content, understand the root causes, and improve its own ability to find these types of issues in new code that is sent to it for review. That is the best way to use the power of AI to build capabilities humans can’t achieve.
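One way to picture this loop is as a growing memory of lessons that gets folded into every review request. The sketch below is only an illustration under stated assumptions: `Escape`, `ReviewMemory`, and the prompt wording are hypothetical names invented here, the root causes are assumed to come from your own RCA or from the agent’s reading of the defect, and the step of actually sending the prompt to an agent is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class Escape:
    """A defect that slipped past review, with its root cause (hypothetical shape)."""
    defect_id: str
    root_cause: str  # e.g. "missing null check on optional config value"
    pattern: str     # short category label, e.g. "null-handling"


@dataclass
class ReviewMemory:
    """Accumulates lessons from escapes, grouped by pattern."""
    lessons: dict[str, list[str]] = field(default_factory=dict)

    def learn(self, escape: Escape) -> None:
        """Record the root cause of an escape, skipping exact duplicates."""
        causes = self.lessons.setdefault(escape.pattern, [])
        if escape.root_cause not in causes:
            causes.append(escape.root_cause)

    def build_prompt(self, diff: str) -> str:
        """Compose review instructions that include every lesson learned so far."""
        lines = ["Review the diff below. Pay special attention to these past escapes:"]
        for pattern in sorted(self.lessons):
            for cause in self.lessons[pattern]:
                lines.append(f"- [{pattern}] {cause}")
        lines.append("")
        lines.append(diff)
        return "\n".join(lines)


# Illustrative data only; in practice the escapes come from your defect tracker.
memory = ReviewMemory()
memory.learn(Escape("BUG-1", "missing null check on optional config value", "null-handling"))
memory.learn(Escape("BUG-2", "retry loop without backoff", "resilience"))
prompt = memory.build_prompt("diff --git a/app.py b/app.py ...")
print(prompt)
```

The design choice here is to keep the lessons as plain data outside the agent, so the same list can be inspected by humans and compared against the escape ratio over time.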

Try it, tell me how it works for you!

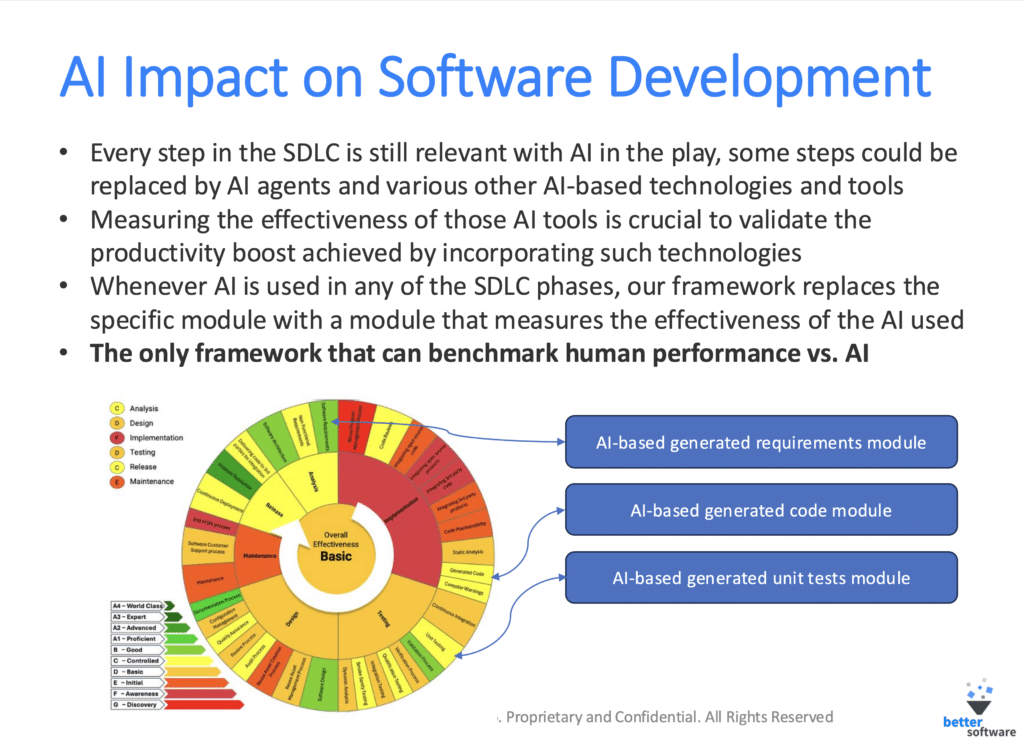

Advanced material: using AI for code review requires measuring code review effectiveness. That is an instance of a general pattern: “to use AI in any phase of the SDLC, you need to measure the effectiveness of that SDLC phase”. To date, there is only one framework (yes, it’s ours) that can do that…

The Smart Way

AI can do a lot to improve your team’s code review process. We’ve seen great examples of it.

The answer is, as always, a bit complex: you have to measure code review effectiveness, feed your AI reviewer with defect streams, and ask it to improve.

Once you do that, start experimenting and enjoy the ride!